Part 1 of a series on the future of the web

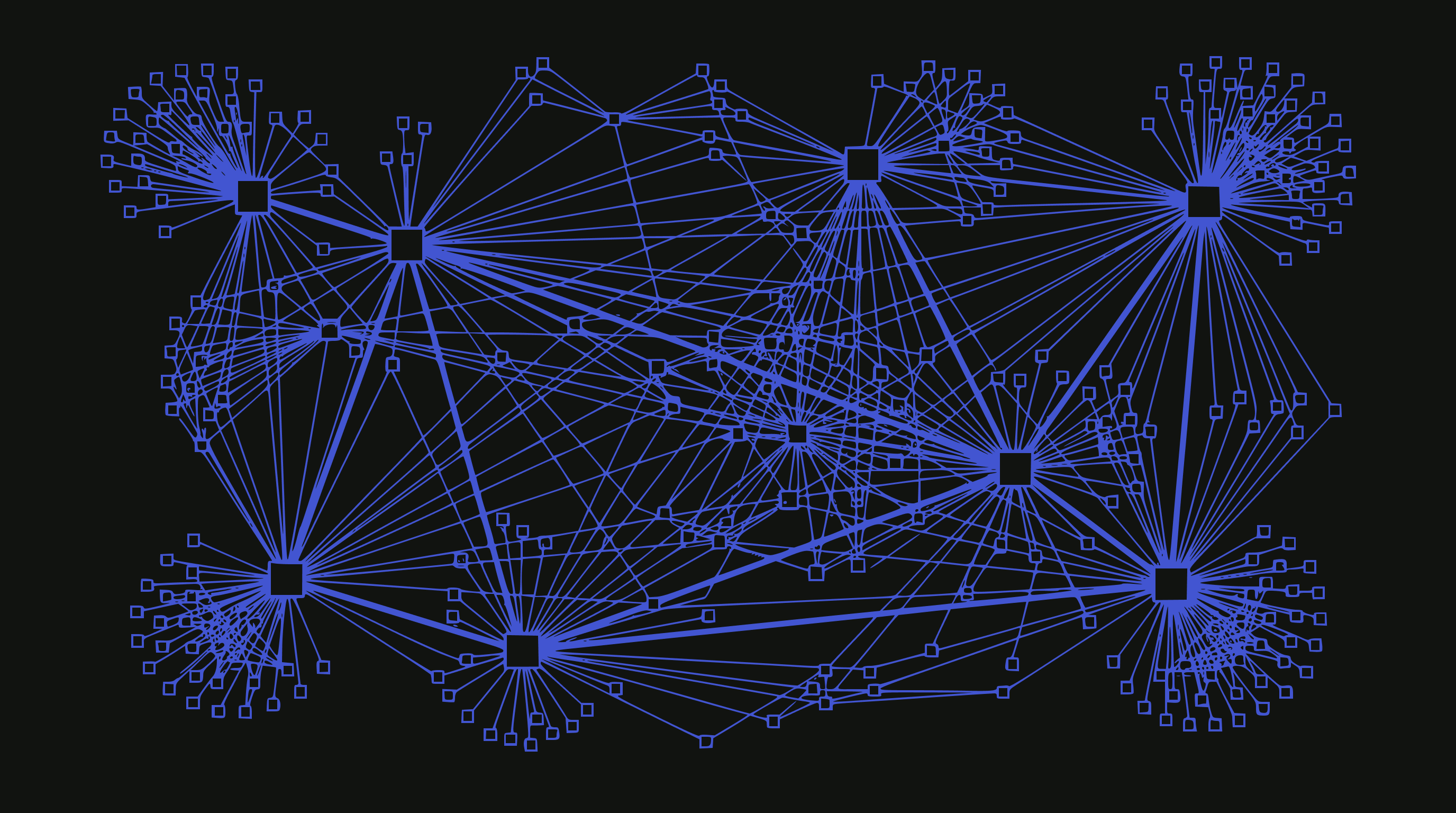

To understand what the website of the future looks like, we should start by zooming out. Way out. Because the web isn’t pages. The web is a living graph of entities.

An entity is a node on that graph, something that represents a real thing: a person, a business, a concept. Each entity connects to other entities through links, citations, semantic similarity, and trust signals. This structure has been remarkably stable for thirty years. What’s changing now isn’t the structure. It’s everything else.

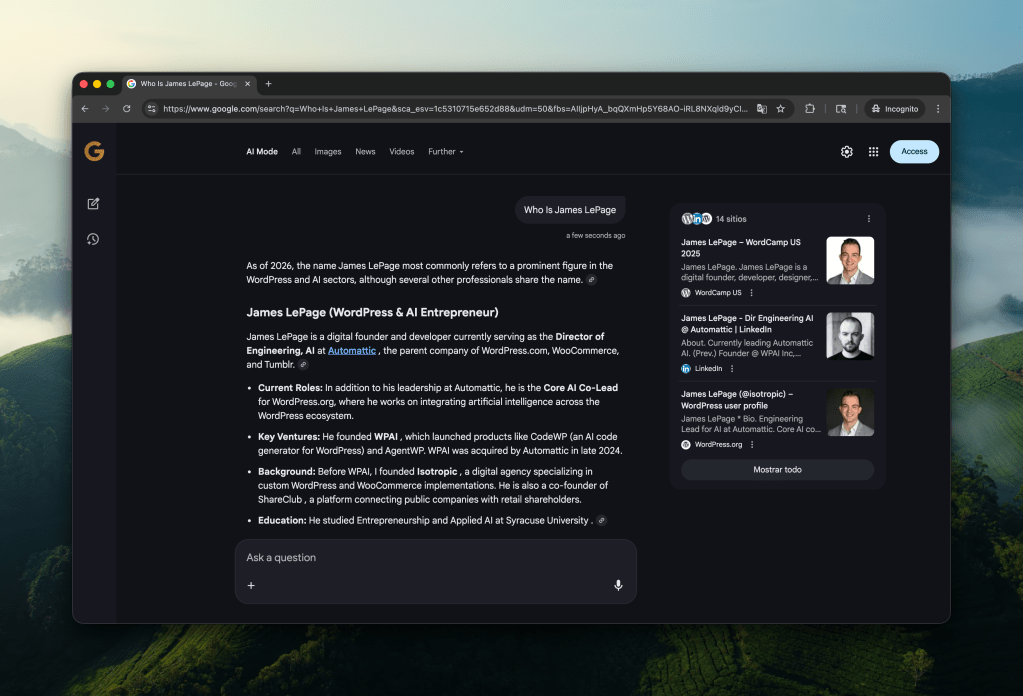

The shift isn’t subtle. Google’s AI Overviews now appear on a significant percentage of searches, and when they do, click-through rates to publisher sites drop by nearly half. ChatGPT processes billions of queries a day, synthesizing answers from content it’s ingested without sending visitors to the source. Perplexity, Claude, Gemini, and a growing list of AI intermediaries are doing the same thing. The pattern is consistent: AI consumes the content, the user (directly) reads less, and the publisher who created that content captures nothing.

This isn’t a temporary disruption. It’s a fundamentally changing Consumption Model (see glossary), and how the web works.

The Web as Entity Graph

Think about what a website actually is. It’s a collection of content about something. That something is the entity, whether it’s a company, a person, or an idea. The website is how that entity shows up digitally. Call this the entity’s “presence”.

Within a presence, there are surfaces. These are the individual addressable locations: pages, posts, products, endpoints. And within those surfaces, there’s content. Text first, usually, with multimedia layered in. Images, videos, audio. But fundamentally, it’s a repository of information about an entity.

This framing matters because it separates the thing from its representation. The entity exists independent of any particular surface. The presence is just one way the entity shows up in the world. When you think about it this way, the website was never the point. The website was a delivery mechanism for content, optimized for a world where humans did the reading and clicking. If humans aren’t the ones reading anymore, the delivery mechanism needs to begin to shift.

One entity may also be a constellation of related things. A company might have a marketing site, a blog, a documentation site, an ecommerce store, maybe some subdomains. All of these are part of the same entity’s presence on the web. They link to each other, they reinforce each other, they build authority together.

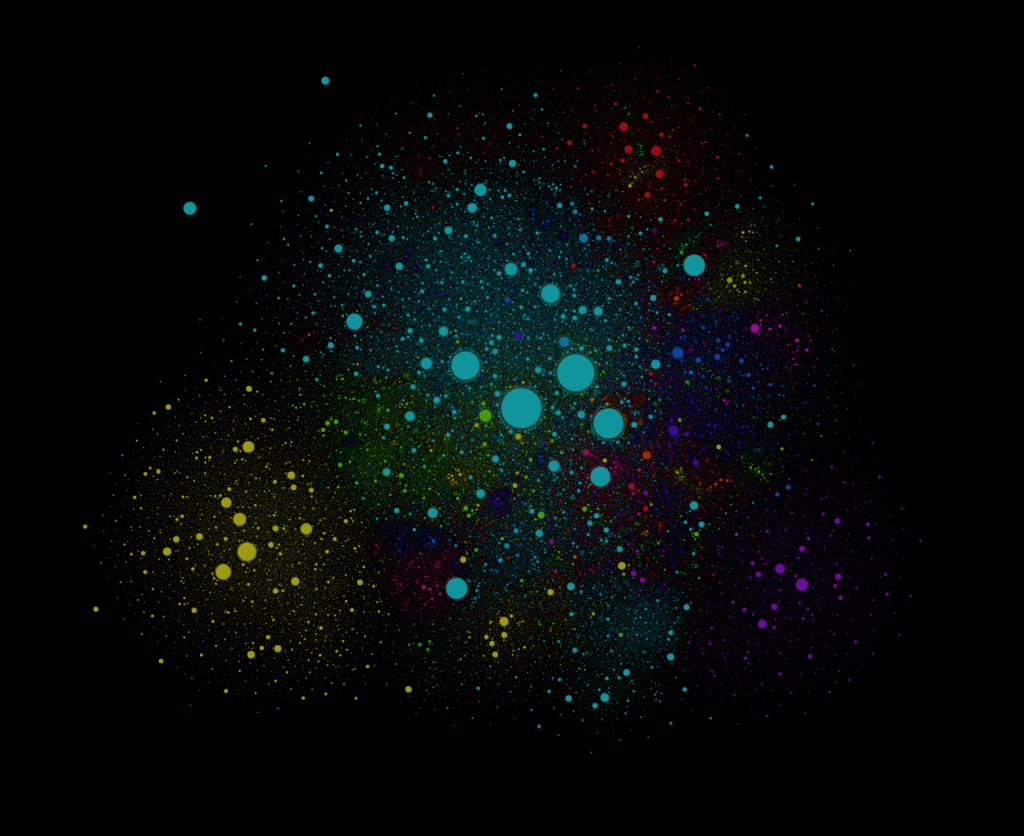

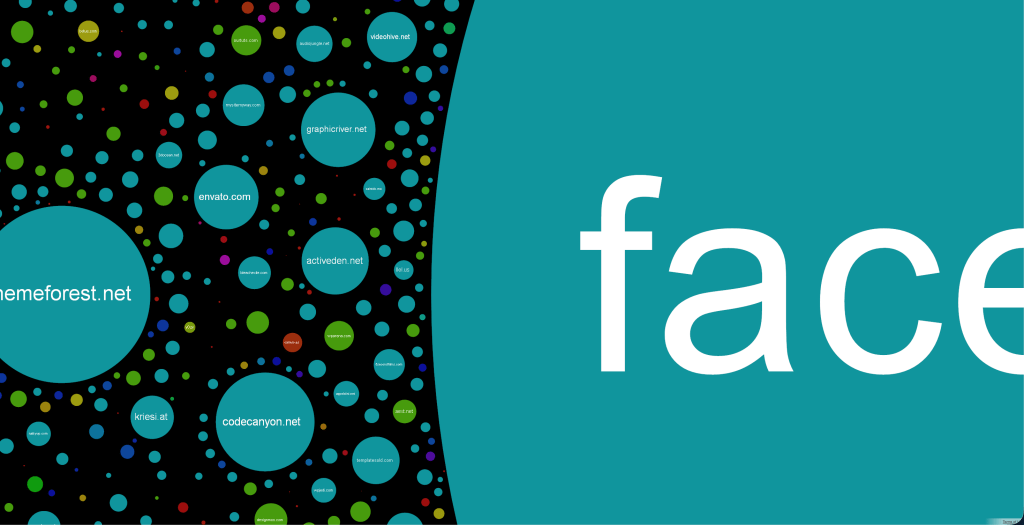

If you want to see what this actually looks like when you zoom out, check out The Internet Map. It’s a visualization of this living web. Billions of entities, trillions of individual pages, all deeply interconnected.

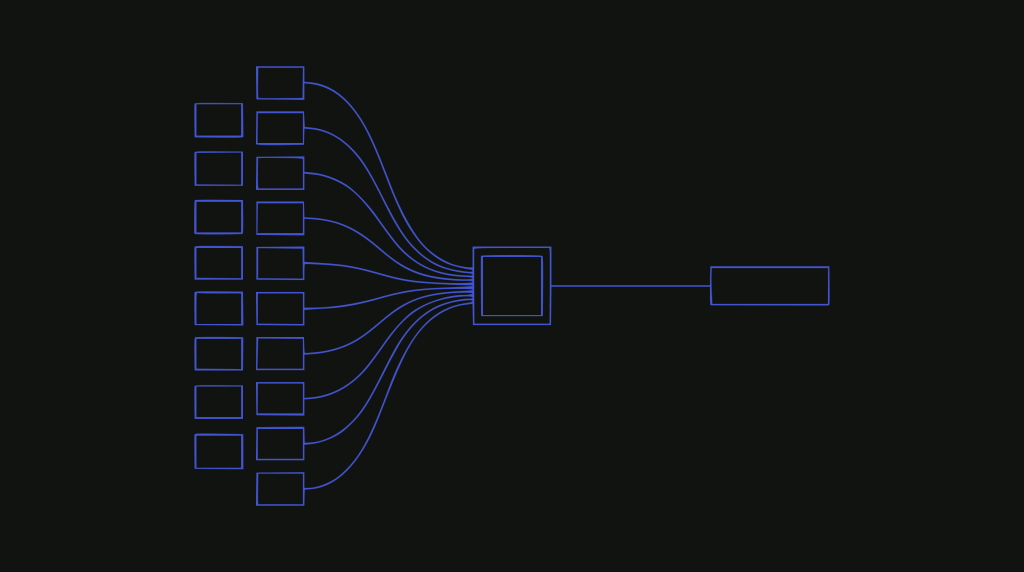

How the Web Worked

The traditional Interaction Model was synchronous and static. A person would go into this connected web looking for information. They knew that content existed somewhere, they knew it was probably authoritative (business card/shop window has URL -> that’s probably their site, things like this), and they would navigate to it, either directly, or through search, or by following links. Then they would read the content, consume it in real time, and synthesize the answer in their own head.

The atomic unit of value creation on the web is intent fulfillment, and that unit of value capture has historically been the conversion.

To me, a conversion doesn’t just mean a purchase. It might be answering a question. It might be reaching a decision. It might be booking a service. But the pattern is the same: arrive with a goal, interact with content that the entity(s) provides, leave with that goal accomplished.

Over the years, this model has been incredibly durable. Even as walled gardens emerged, even as Facebook and Instagram and Twitter created their own enclosed ecosystems with pages for people and businesses, the open web persisted. Those platforms never fully replaced the need for an owned presence. Entities still needed their own node on the graph, something they controlled, something canonical.

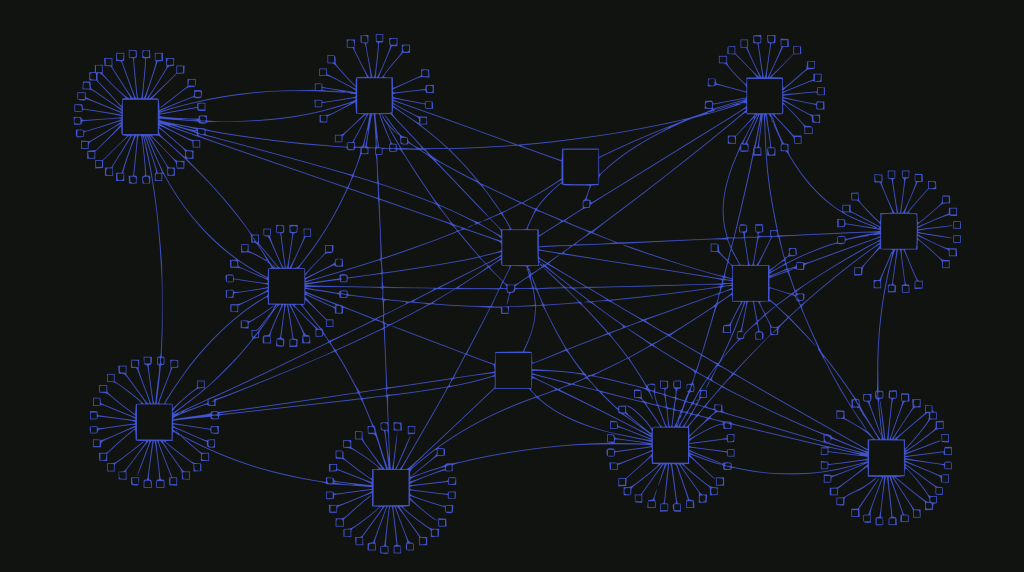

Everything Is Shifting at Once

What makes this moment disorienting to some, but also exciting, is that multiple systems are changing simultaneously. It’s not just consumption models or discovery mechanisms or economics of the internet. It’s all of them, at the same time, and they’re interconnected.

The consumption model used to be straightforward. A user goes to a surface, consumes content directly, and synthesizes the information themselves.

Now an AI intermediary does the synthesis across dozens of surfaces and delivers the answer directly. The user can get what they need (achieve that conversion) without directly visiting the source anymore.

The Discovery Model followed a similar arc. Search engines ranked surfaces, users clicked through results, and the ranking itself was the scarce resource publishers competed for. But when agents can traverse the graph autonomously and synthesis replaces rankings, what does it mean to be “found”? Discovery becomes less about where you rank and more about whether your content gets included in (and influences) the synthesis at all.

The trust model is inverting. Authority used to be established through links, domain age, and human authorship signals. Search engines got decent at detecting these. But in a world of AI-generated content and agent-mediated interactions, those signals become easier to fake and harder to verify. New trust mechanisms are starting to emerge: attestation, provenance tracking, verified entity claims.

The representation model is evolving from static to adaptive. Today, a website presents the same information to every visitor. Maybe there’s some personalization around logged-in users, but the core content is fixed.

In an AI-mediated world, representation could become genuinely dynamic: different information surfaced to different visitors based on who’s asking and why.

Personalization no longer being a nice to have, but a hard requirement to service this agentic web. More on that later in the series.

Shifting Economic Models, Too

All of these shifts matter, but the Economic Model is the one that’s actually breaking.

For media, the traditional web ran on a simple exchange: publishers created content, users visited to read it, and that attention could be monetized through ads or converted into transactions. Discovery drove attention, attention drove revenue. The economics of content creation depended on this chain.

AI synthesis breaks that chain. When an intermediary reads your content and delivers the answer without the user ever visiting, you’ve provided value but captured very little of of it. The synthesis is built on your work, but the transaction happens elsewhere.

This isn’t hypothetical. Major publishers are reporting year-over-year traffic declines of 20% or more from search. 1500+ organizations that have joined the RSL Collective are trying to establish licensing frameworks precisely because the current model isn’t working. Cloudflare is now blocking AI crawlers by default and experimenting with pay-per-crawl mechanisms because publishers demanded it.

The people creating the content that makes AI useful are not being compensated for that contribution. And if that doesn’t change, it discourages the creation of it, and the whole system degrades.

Recently I’ve been saying: AI doesn’t (yet) have robots that fly around and interview people, report on the the news, etc. No matter how impressive the systems are, they still and will depend on publishers and creators.

What Comes Next

The next post digs into the economics specifically: the current model, and how it’s shipping, what a solution might look like, and why this is actually solvable with proper coordination. The music industry went through a similar evolution when streaming unbundled albums. They figured it out. The web can too.

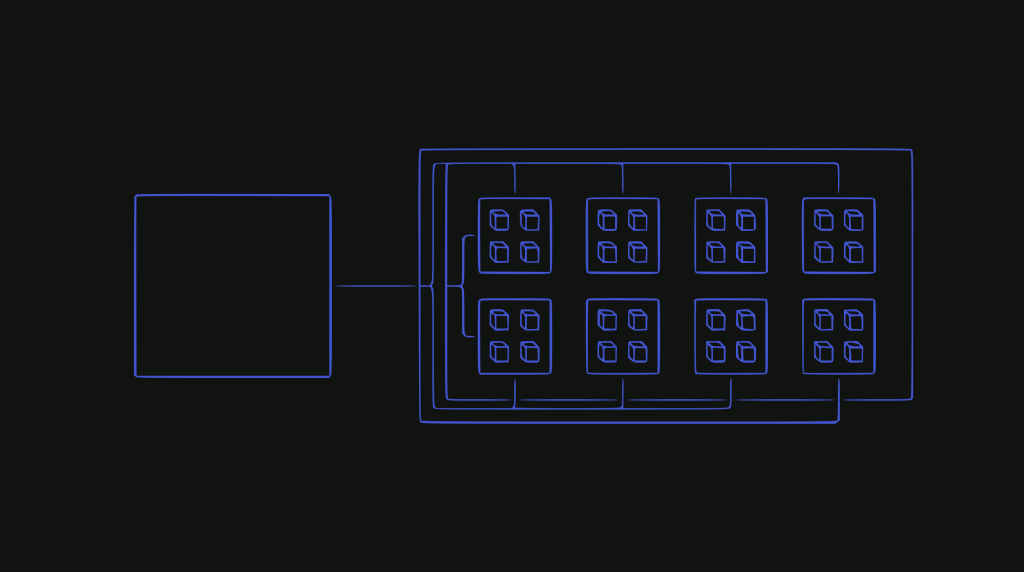

After that, the series explores what happens when AI systems move from being intermediaries, things that synthesize and present information, to being agents, things that take action on behalf of users. That shift changes everything about how entities need to represent themselves online.

And eventually, there’s the question of what this means for WordPress specifically. Because if the web is becoming a graph of entities represented by adaptive agents, the publishing platform that powers 43% of that web needs to be ready.

The stakes are real. Get this wrong, and the open web fragments into walled gardens where only the largest players can afford to participate. Get it right, and we build something better than what exists now: a web where creators are compensated fairly, where users get better answers, and where AI makes everyone more capable rather than just extracting value from the middle.

In addition to democratizing publishing, we can take it a step further. Democratizing representation.